Welcome to HPC@KIT!

Choose your language. Then swipe left, click the button in the lower right corner or navigate right on your keyboard to begin. Press the Escape key to navigate.

Welcome to the data center building at KIT Campus North!

Click on one of the links on the illustration to get more information.

Supercomputers

KIT and its predecessor organisations have operated more than 30 Tier 2 and Tier 3 supercomputers over the last three decades. The fastest system currently installed is called "Forschungshochleistungsrechner II" (ForHLR II).

ForHLR II will be replaced by the much more powerful HoreKa system in 2021.

Scroll or swipe down for more information about ForHLR II and HoreKa.

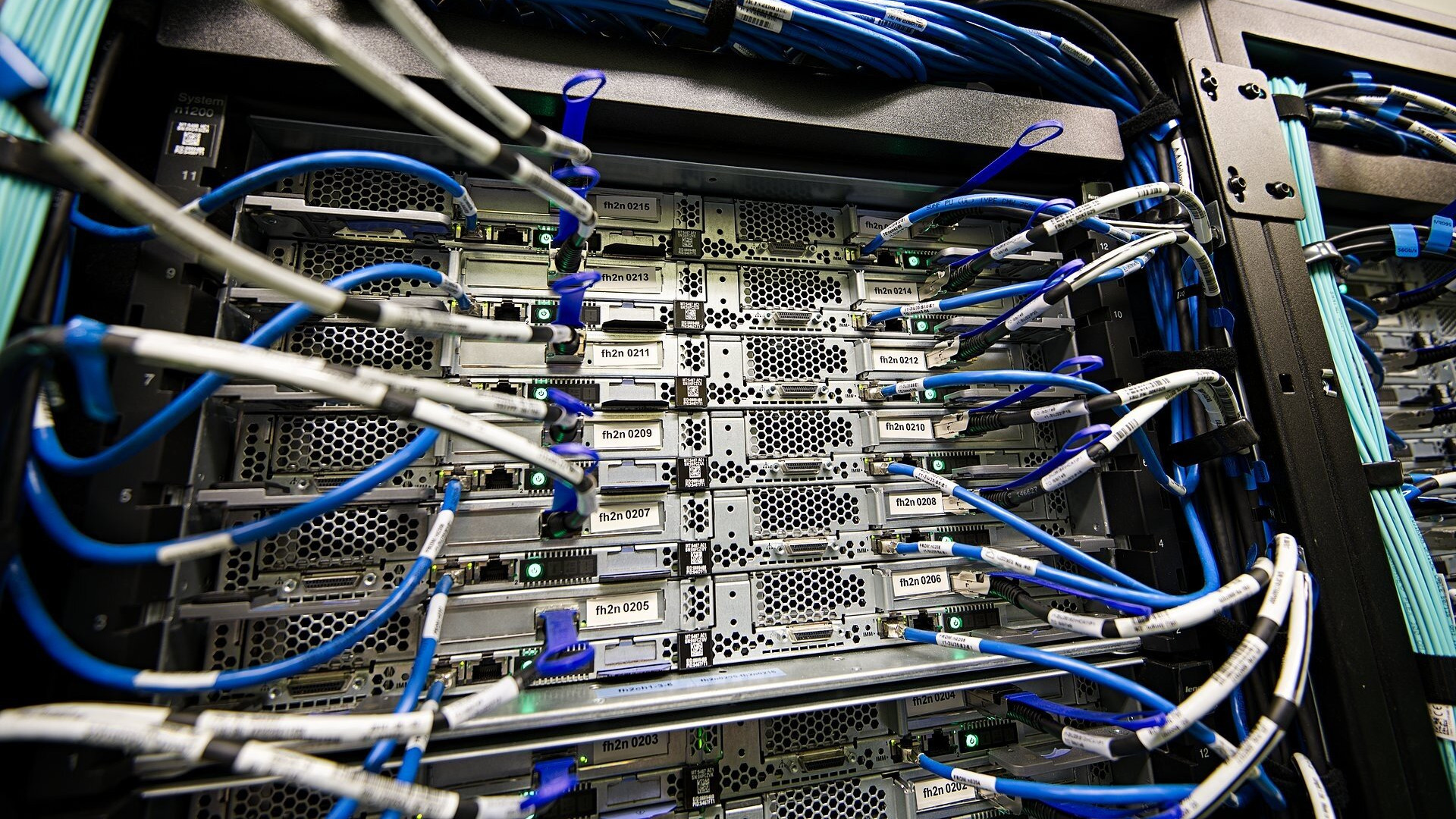

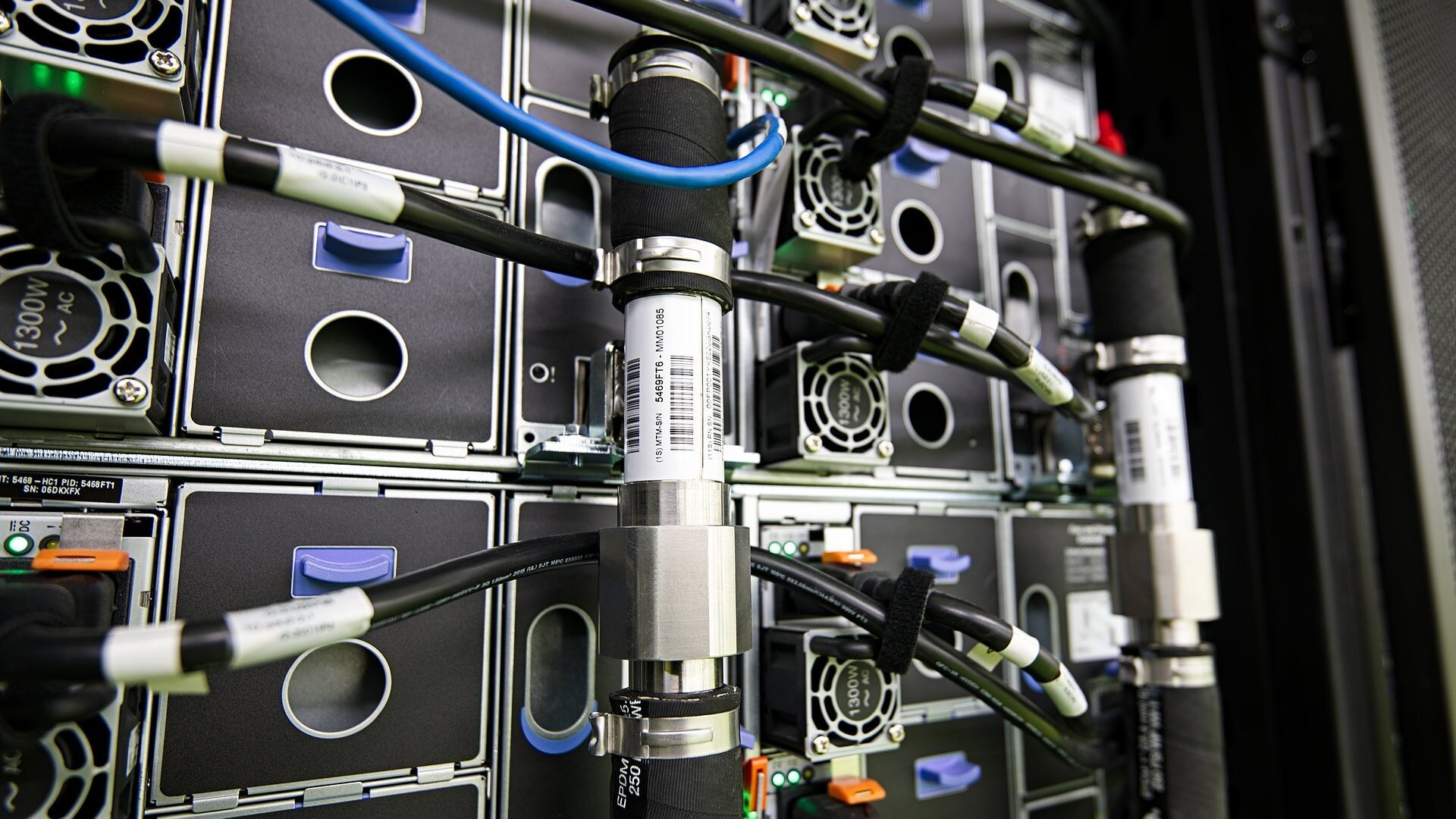

The "Forschungshochleistungsrechner II" is a hot-water cooled Petaflop system installed in 2016.

The system consists of 1,186 Intel Xeon "Haswell" processor nodes with 24,000 CPU cores and 74 TB of memory.

The processor nodes are organized in 96 node chassis containing 12 nodes each. All nodes share the same power supply infrastructure to increase efficiency.

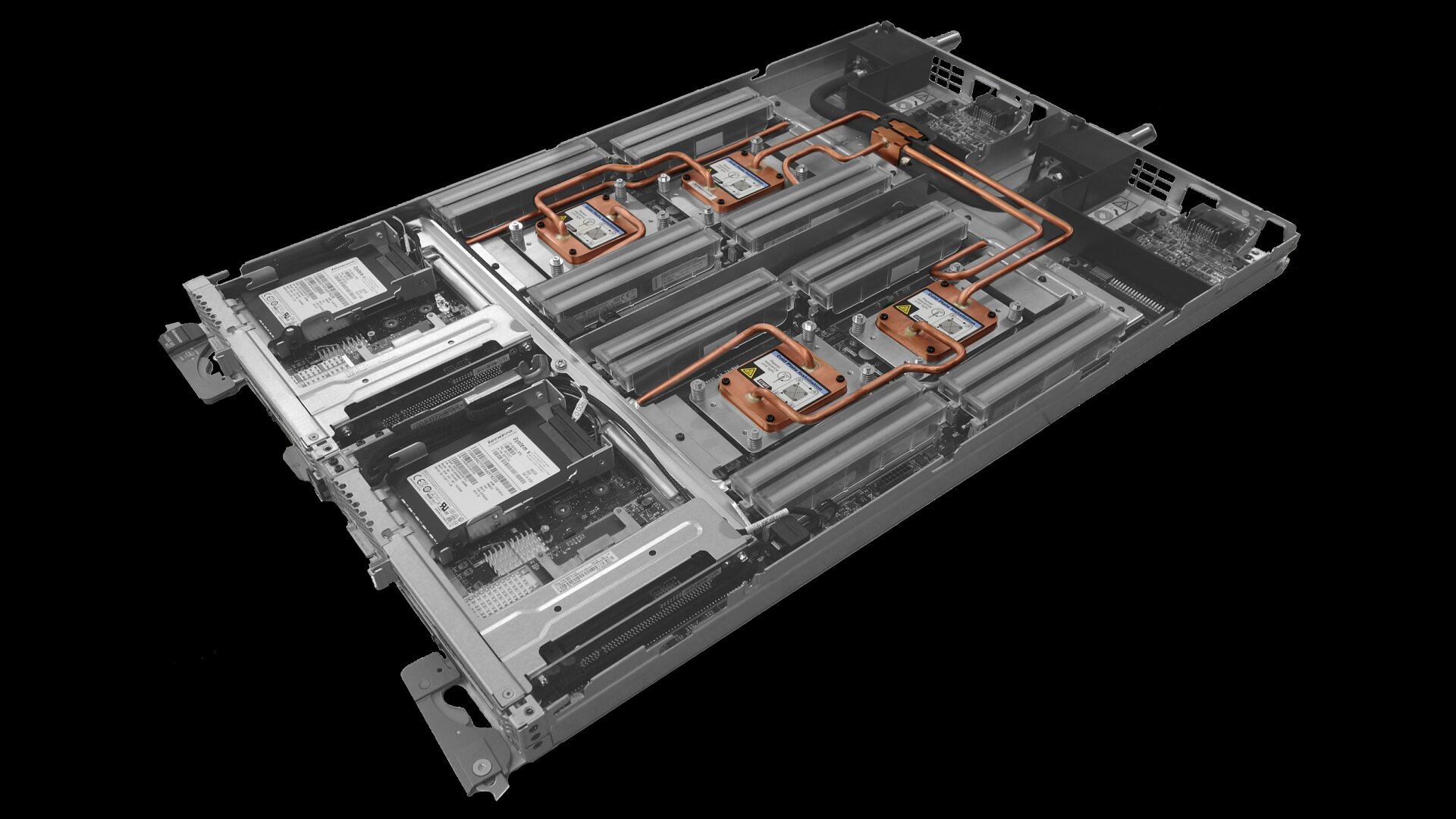

The basic building block of the system is a server blade containing two processor nodes. Each processor node has two Intel Xeon "Haswell" processors, 64 GB of memory and a connection to the high-performance network interconnect.
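The figures quoted for ForHLR II can be cross-checked with a little arithmetic. The sketch below is only an illustration: it assumes that every node has 64 GB of memory and that the 74 TB total is expressed in binary units (1 TB = 1024 GB). Neither assumption is stated in the text, and the function names are made up for this example.

```python
# Figures quoted in the text.
CHASSIS = 96
NODES_PER_CHASSIS = 12
TOTAL_NODES = 1186
TOTAL_CORES = 24_000
MEMORY_PER_NODE_GB = 64  # assumption: applied to every node


def chassis_nodes(chassis: int = CHASSIS, per_chassis: int = NODES_PER_CHASSIS) -> int:
    """Number of nodes housed in the node chassis."""
    return chassis * per_chassis


def total_memory_tb(nodes: int = TOTAL_NODES,
                    per_node_gb: int = MEMORY_PER_NODE_GB,
                    binary: bool = True) -> float:
    """Total memory in TB; binary=True uses 1024 GB per TB (assumption)."""
    divisor = 1024 if binary else 1000
    return nodes * per_node_gb / divisor


def average_cores_per_node(cores: int = TOTAL_CORES, nodes: int = TOTAL_NODES) -> float:
    """Average core count per node, from the rounded totals in the text."""
    return cores / nodes


if __name__ == "__main__":
    print(f"Nodes in chassis:       {chassis_nodes()}")
    print(f"Memory (binary TB):     {total_memory_tb():.1f}")
    print(f"Memory (decimal TB):    {total_memory_tb(binary=False):.1f}")
    print(f"Average cores per node: {average_cores_per_node():.1f}")
```

Under these assumptions, the 96 chassis account for 1,152 of the 1,186 nodes, and the memory total comes out at roughly 74 TB in binary units.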

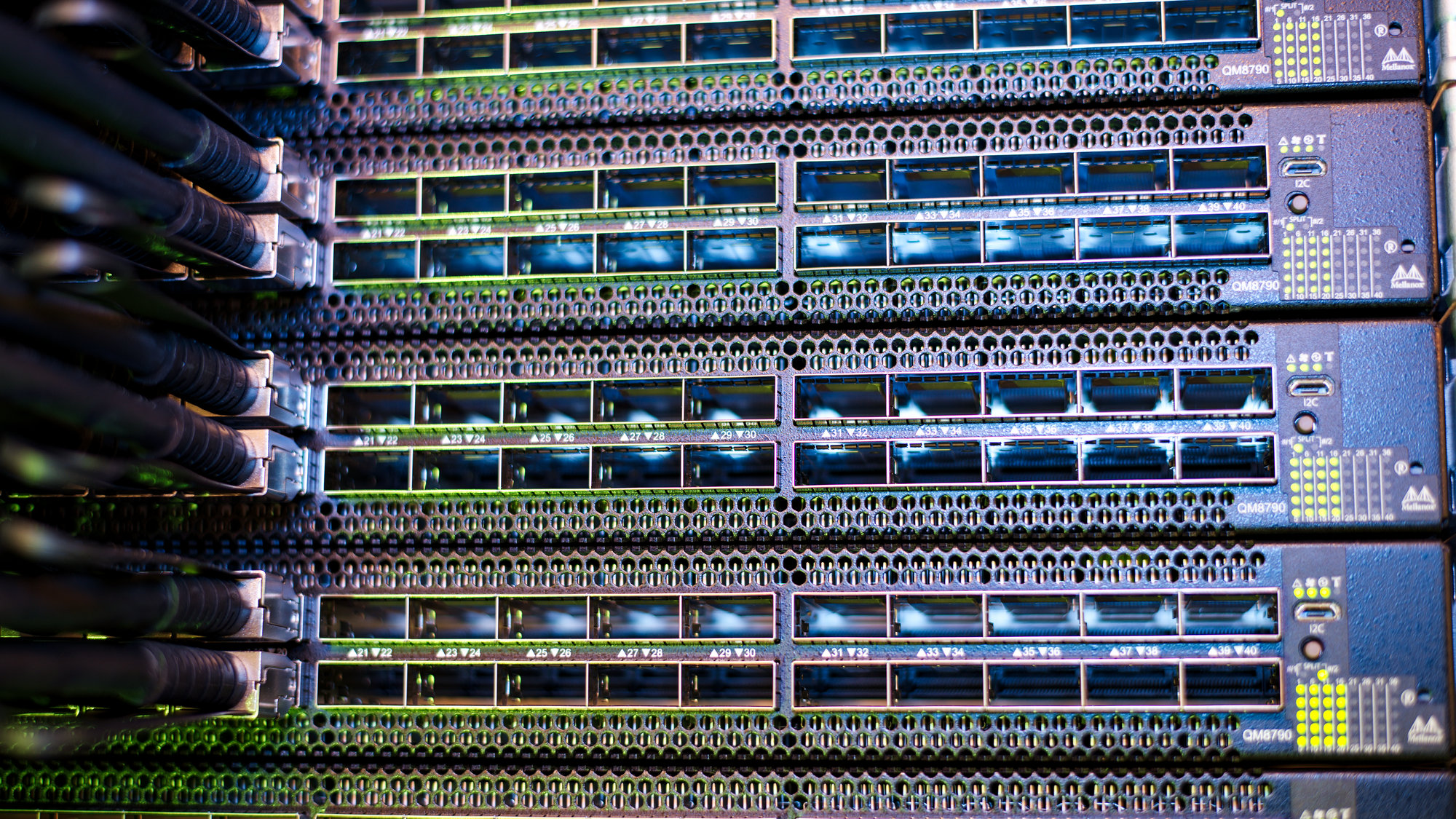

ForHLR II uses high-performance InfiniBand as its core communication network. Each network port can send and receive data at a speed of 100 Gigabits per second.
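To get a feeling for what 100 Gigabits per second means, the following sketch converts the port speed to Gigabytes per second and estimates how long it would take to send the 64 GB memory content of one node through a single port. It is a rough estimate that ignores protocol overhead, and the function names are hypothetical.

```python
def gbit_to_gbyte_per_s(gbit_per_s: float) -> float:
    """Convert Gigabits per second to Gigabytes per second (8 bits per byte)."""
    return gbit_per_s / 8


def transfer_time_s(data_gb: float, link_gbit_per_s: float = 100.0) -> float:
    """Idealized transfer time in seconds, ignoring protocol overhead."""
    return data_gb / gbit_to_gbyte_per_s(link_gbit_per_s)


if __name__ == "__main__":
    print(f"Port speed: {gbit_to_gbyte_per_s(100.0)} GB/s")
    print(f"Time to send 64 GB: {transfer_time_s(64):.2f} s")
```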

ForHLR II is about to be replaced by the much faster "Hochleistungsrechner Karlsruhe" (HoreKa). The new system is currently being installed and is scheduled to go into operation in mid-2021.

Storage

Scientific calculations produce huge amounts of data. This data is stored on specialized parallel file systems designed to deliver extreme performance.

Scroll or swipe down to learn more.

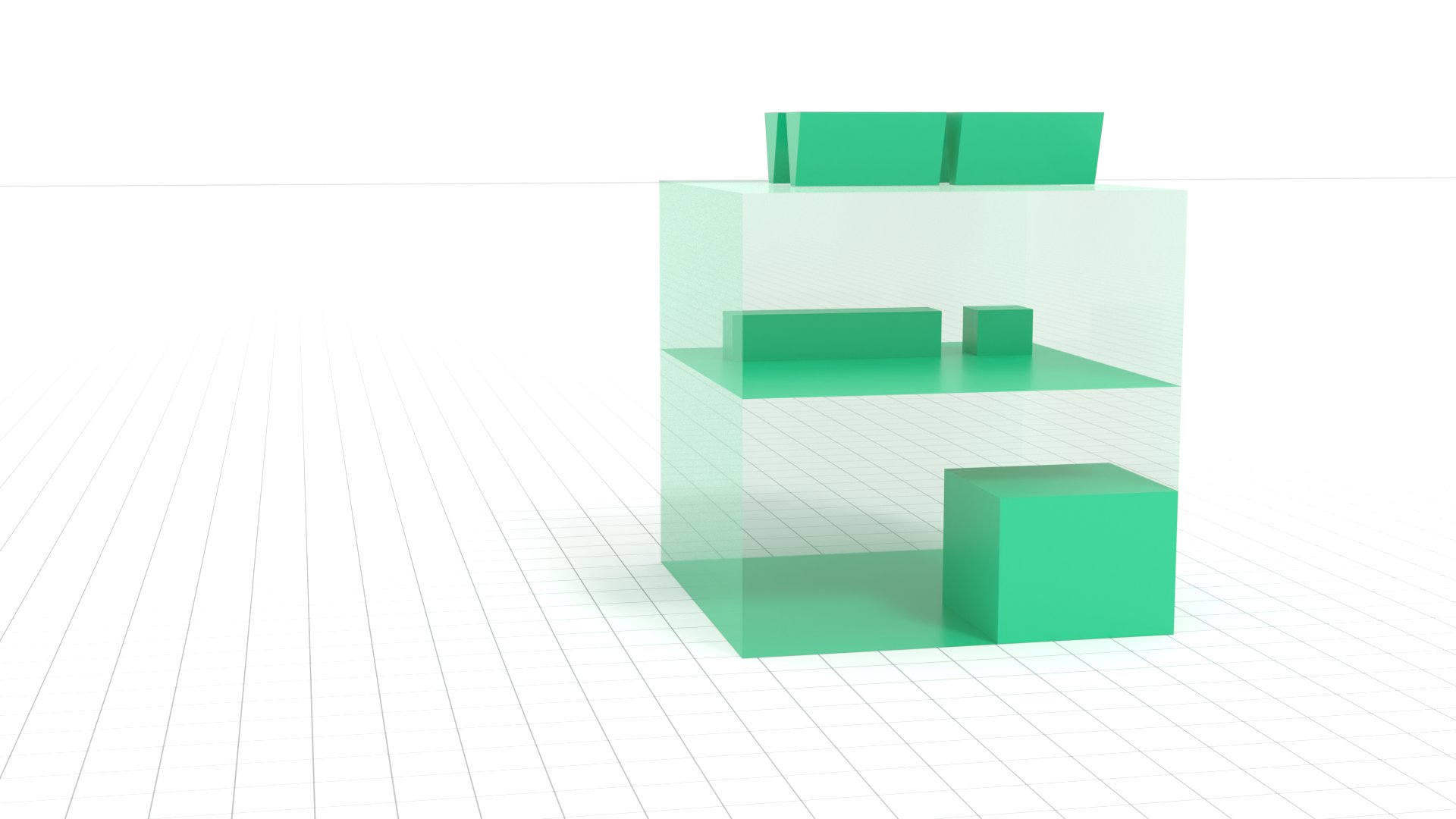

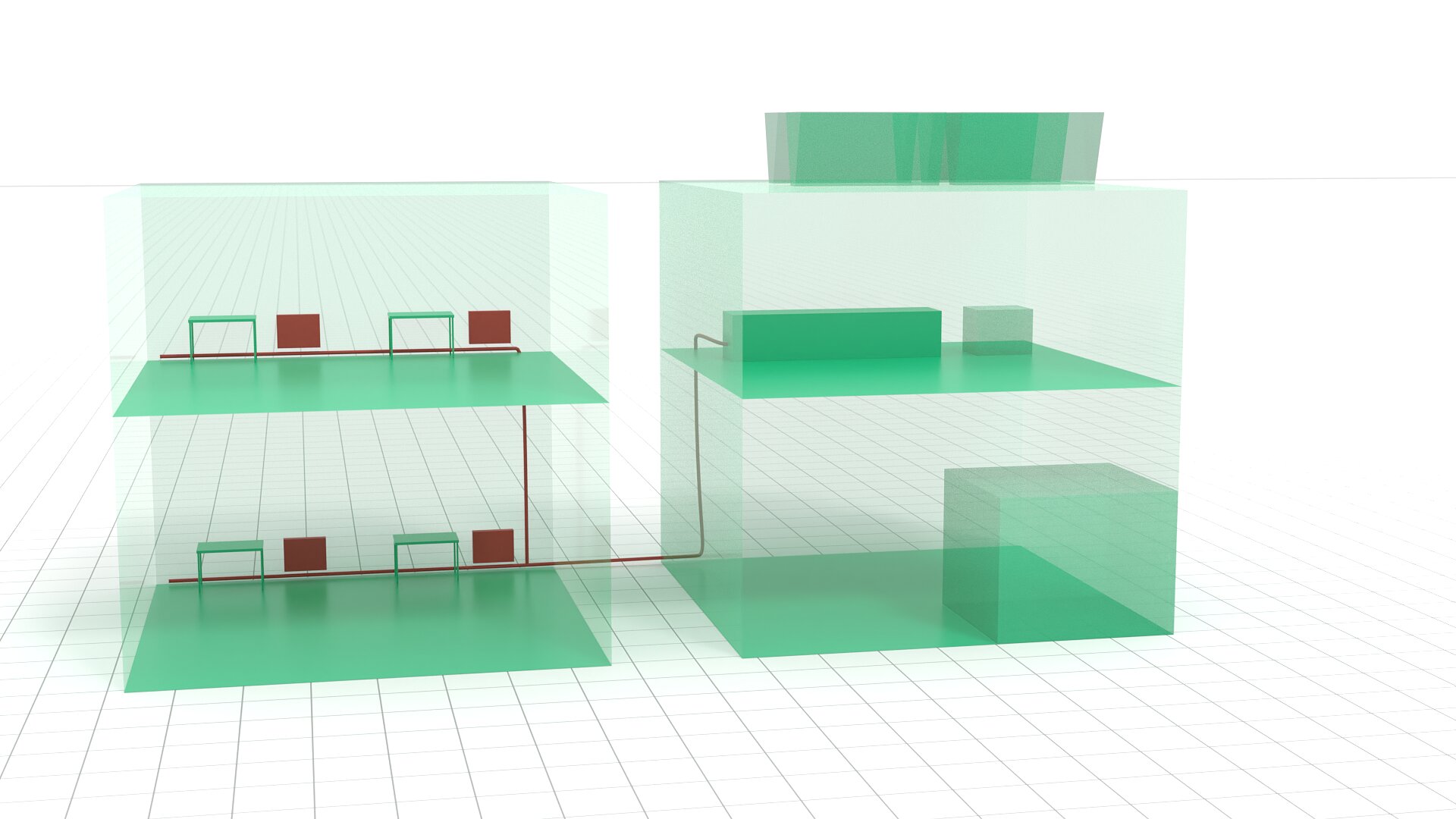

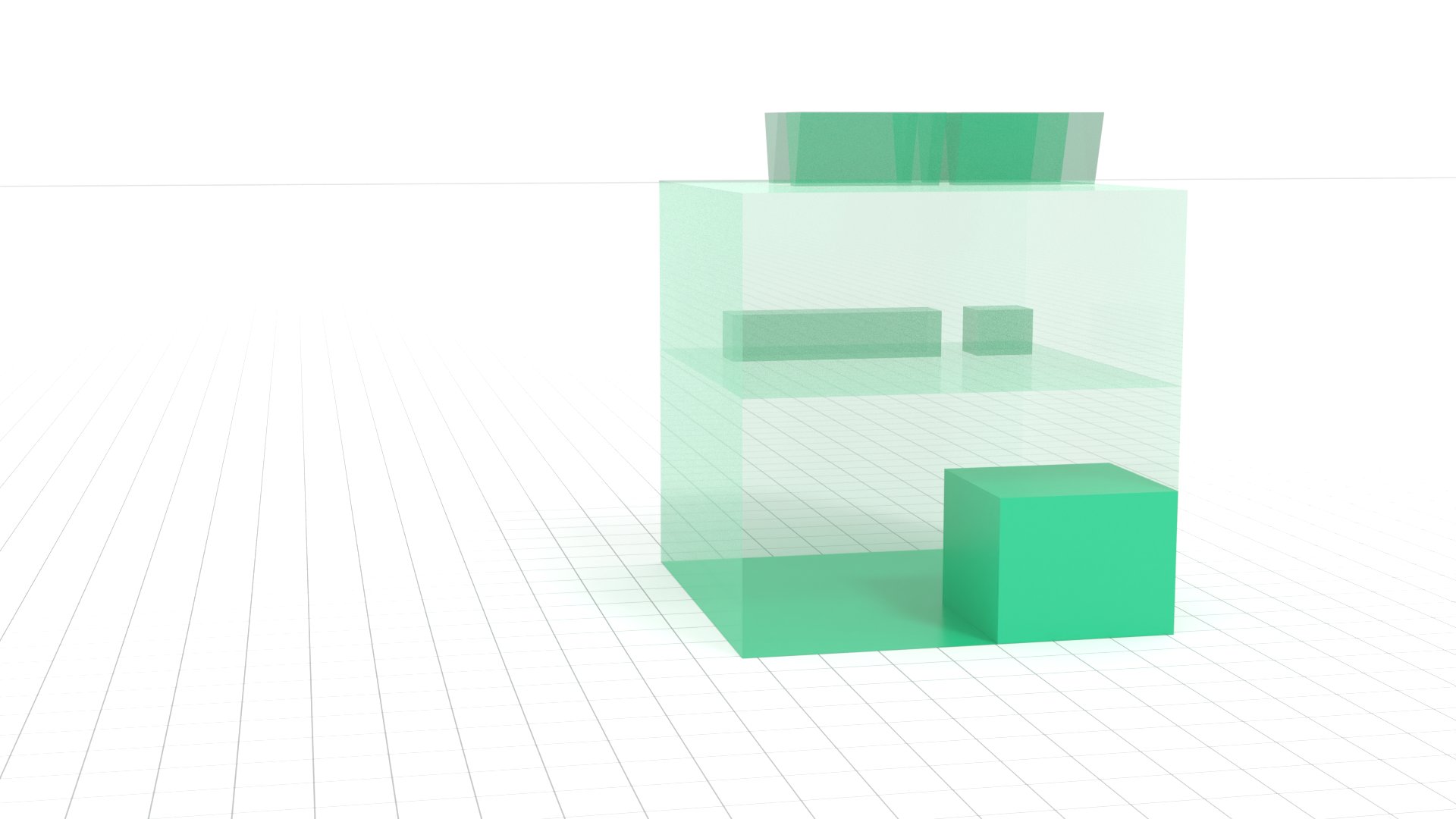

Storage Systems

ForHLR II uses a 5 Petabyte Lustre file system, while the file system for HoreKa pictured here can store more than 16 Petabytes. That's equivalent to 16 million Gigabytes.

Parallel file systems consist of thousands of storage devices. Special software makes them appear like a single, big storage system to the users.

While Flash-based storage devices are becoming more important due to their high speeds, they are still much more expensive than magnetic hard drives when high capacities are needed.

This is why the 16 Petabyte HoreKa file system is still equipped with more than 1,500 hard drives.
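The capacity figures can be put into perspective with a short calculation. The sketch below converts Petabytes to Gigabytes using decimal units and estimates the average capacity per hard drive. It assumes that all 16 Petabytes sit on exactly 1,500 drives and ignores redundancy and any flash storage, so the result is only a ballpark figure. The function names are hypothetical.

```python
GB_PER_PB = 1_000_000  # decimal units: 1 PB = 1,000 TB = 1,000,000 GB
TB_PER_PB = 1_000


def petabytes_to_gigabytes(petabytes: float) -> float:
    """Convert Petabytes to Gigabytes (decimal prefixes)."""
    return petabytes * GB_PER_PB


def average_tb_per_drive(capacity_pb: float, drives: int) -> float:
    """Average usable capacity per drive in TB, ignoring redundancy."""
    return capacity_pb * TB_PER_PB / drives


if __name__ == "__main__":
    print(f"16 PB = {petabytes_to_gigabytes(16):,.0f} GB")
    print(f"Average per drive: {average_tb_per_drive(16, 1500):.1f} TB")
```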

Cooling

Supercomputers need huge amounts of energy to perform their work. Nearly all of the energy is converted to heat, so the systems have to be cooled.

The data center housing the ForHLR II and future HoreKa supercomputers uses an award-winning, highly efficient hot-water cooling concept.

Scroll or swipe down to learn more.

Cooling

ForHLR II relies on a direct-to-chip hot-water cooling system. Water enters the nodes at a temperature of about 40°C, passes over all the components and returns to the cooling circuit at a temperature of 45°C or above.

The node chassis are supplied with cooling water through water pipes connected to the racks.

Big pumps in the infrastructure room circulate tens of thousands of liters of hot water through the supercomputer system every hour.
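The amount of water needed can be estimated from the heat balance: heat flow = mass flow × specific heat capacity × temperature rise. The sketch below assumes that the full peak power of 500 kilowatts quoted in the Power section ends up as heat in the water, and that the water warms by 5 K (from 40°C to 45°C). The material constants are approximate values for water at around 40°C, and the function name is made up for this example.

```python
SPECIFIC_HEAT_J_PER_KG_K = 4180.0  # approximate value for water near 40 °C
DENSITY_KG_PER_L = 0.99            # approximate value for water near 40 °C


def required_flow_l_per_h(heat_kw: float, delta_t_k: float) -> float:
    """Water flow (liters per hour) needed to carry away heat_kw
    with a temperature rise of delta_t_k."""
    heat_w = heat_kw * 1000.0
    mass_flow_kg_per_s = heat_w / (SPECIFIC_HEAT_J_PER_KG_K * delta_t_k)
    volume_flow_l_per_s = mass_flow_kg_per_s / DENSITY_KG_PER_L
    return volume_flow_l_per_s * 3600.0


if __name__ == "__main__":
    flow = required_flow_l_per_h(heat_kw=500.0, delta_t_k=5.0)
    print(f"Required flow: about {flow:,.0f} liters per hour")
```

Under these assumptions the result is in the range of tens of thousands of liters per hour. A larger temperature rise would reduce the required flow accordingly.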

Since it is rarely hotter than 35°C outside in Karlsruhe, the hot water transferred to the chillers cools down to less than 40°C without additional cooling.

Part of the heat can be reused to heat the adjacent office buildings.

The extremely energy-efficient cooling concept of the data center received the German Data Center Award in 2017.

Power

Supercomputers, storage and cooling require huge amounts of energy. ForHLR II has a peak power consumption of 500 kilowatts, and the data center is designed for loads of up to 1 megawatt. Providing all data center components with electric energy is a complicated task.

Scroll or swipe down for more information about power distribution.

Power

An uninterruptible power supply with a capacity of 1.3 megawatts provides the supercomputers with stable power.

More than 800 batteries store enough energy to keep the whole data center running for more than 15 minutes at full load in case the external power grid should fail.
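The energy the batteries have to deliver follows from energy = power × time. The sketch below is a rough lower-bound estimate: it assumes exactly 800 batteries and exactly 15 minutes of runtime, and it takes "full load" to mean either the 1 megawatt design load of the data center or the 1.3 megawatt capacity of the power supply. The text does not say which one is meant, and conversion losses are ignored. The function names are hypothetical.

```python
def energy_kwh(load_kw: float, minutes: float) -> float:
    """Energy in kilowatt-hours needed to supply load_kw for the given time."""
    return load_kw * minutes / 60.0


def energy_per_battery_kwh(load_kw: float, minutes: float, batteries: int) -> float:
    """Average energy each battery must deliver, ignoring conversion losses."""
    return energy_kwh(load_kw, minutes) / batteries


if __name__ == "__main__":
    for load_kw in (1000.0, 1300.0):  # two possible readings of "full load"
        total = energy_kwh(load_kw, 15)
        each = energy_per_battery_kwh(load_kw, 15, 800)
        print(f"{load_kw:.0f} kW for 15 min: {total:.0f} kWh in total, "
              f"{each:.2f} kWh per battery")
```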