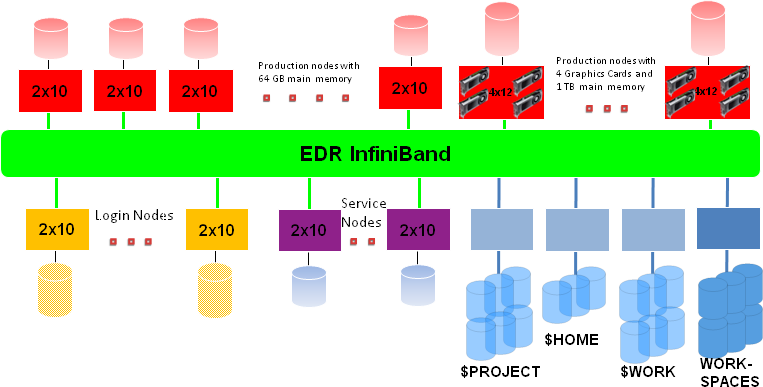

Configuration of the ForHLR II

The research high performance computer ForHLR II includes

- 5 login nodes, each with 20 cores and 256 GB of main memory,

- 1152 "thin" compute nodes, each with 20 cores, a theoretical peak performance of 832 GFLOPS (at 2.6 GHz) and 64 GB of main memory,

- 21 "fat" rendering nodes, each with 48 cores, 4 NVIDIA GeForce GTX980 Ti graphics cards and 1 TB of main memory,

- and an InfiniBand 4X EDR interconnect as the connection network.

The ForHLR II is a massively parallel computer with a total of 1186 nodes, of which 8 are service nodes. This count does not include the file server nodes. All nodes except the "fat" rendering nodes have a base clock frequency of 2.6 GHz (max. 3.3 GHz). The rendering nodes have a base clock frequency of 2.1 GHz (max. 2.7 GHz). All nodes have local memory, local disks and network adapters. A single "thin" compute node has a theoretical peak performance of 832 GFLOPS and a single "fat" rendering node has a theoretical peak performance of 1613 GFLOPS (each at the base clock frequency), resulting in approximately 1 PFLOPS of theoretical peak performance for the entire system. The main memory across all compute nodes is 95 TB, and all nodes are interconnected by an InfiniBand 4X EDR interconnect.
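The peak figures above can be reproduced with a short calculation. The following is a minimal sketch, not an official formula from the operators: it assumes that each Haswell core retires 16 double-precision floating-point operations per cycle (two FMA units, four doubles per AVX2 register, two operations per FMA) and that memory sizes are counted in decimal units. The function and variable names are chosen for illustration only.

```python
# Assumption: 16 double-precision FLOPs per cycle per Haswell core
# (2 FMA units x 4 doubles per AVX2 register x 2 operations per FMA).
FLOPS_PER_CYCLE = 16


def node_peak_gflops(cores: int, base_clock_ghz: float) -> float:
    """Theoretical peak of one node in GFLOPS at the base clock frequency."""
    return cores * base_clock_ghz * FLOPS_PER_CYCLE


# Node counts, core counts and clock rates are taken from the description above.
thin = node_peak_gflops(cores=20, base_clock_ghz=2.6)  # "thin" compute node
fat = node_peak_gflops(cores=48, base_clock_ghz=2.1)   # "fat" rendering node

# Thin and fat nodes only; login and service nodes are left out.
system_pflops = (1152 * thin + 21 * fat) / 1e6

# Assumption: decimal units, i.e. 1 TB = 1000 GB.
memory_tb = (1152 * 64 + 21 * 1000) / 1000

print(f"thin node: {thin:.0f} GFLOPS")
print(f"fat node:  {fat:.0f} GFLOPS")
print(f"system:    {system_pflops:.2f} PFLOPS")
print(f"memory:    {memory_tb:.1f} TB")
```

Under these assumptions the per-node values round to 832 and 1613 GFLOPS, the system total comes to roughly 0.99 PFLOPS, and the memory sum to about 94.7 TB, which matches the rounded figures quoted in the text.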

The base operating system on each node is Red Hat Enterprise Linux (RHEL) 7.x. The cluster is managed with KITE, an open environment for running heterogeneous computing clusters.

The global file system is the scalable, parallel Lustre file system, which is connected via a separate InfiniBand network. The use of multiple Lustre Object Storage Target (OST) servers and Metadata Servers (MDS) ensures both high scalability and redundancy if individual servers fail. After logging in, you are in the PROJECT directory, which is also the HOME directory; the disk space approved by the steering committee for your project is available there. The WORK directory and the workspaces together provide 4.2 PB of disk space. In addition, each node of the cluster is equipped with local SSDs for temporary data.

Detailed description of the nodes:

- 5 20-way login nodes, each with 2 deca-core Intel Xeon E5-2660 v3 processors (Haswell) with a base clock frequency of 2.6 GHz (max. turbo clock frequency 3.3 GHz), 256 GB of main memory and a 480 GB local SSD,

- 1152 20-way compute nodes, each with 2 deca-core Intel Xeon E5-2660 v3 processors (Haswell) with a base clock frequency of 2.6 GHz (max. turbo clock frequency 3.3 GHz), 64 GB of main memory and a 480 GB local SSD,

- 21 48-way rendering nodes, each with 4 12-core Intel Xeon E7-4830 v3 processors (Haswell) with a base clock frequency of 2.1 GHz (max. turbo clock frequency 2.7 GHz), 4 NVIDIA GeForce GTX980 Ti graphics cards, 1 TB of main memory and 4 x 960 GB local SSDs, and

- 8 20-way service nodes, each with 2 deca-core Intel Xeon E5-2660 v3 processors (Haswell) with a base clock frequency of 2.6 GHz and 64 GB of main memory.

A single deca-core processor (Haswell) has 25 MB L3 cache and operates the system bus at 2133 MHz, with each core of the Haswell processor having 64 KB L1 cache and 256 KB L2 cache.

Access to ForHLR II for project participants

Only secure procedures such as secure shell (ssh) and the corresponding secure copy (scp) are allowed for logging in to ForHLR II or copying data to and from it. telnet, rsh and the other r-commands are disabled for security reasons. To log in to ForHLR II, use one of the following commands:

ssh kit-account@forhlr2.scc.kit.edu

or

ssh kit-account@fh2.scc.kit.edu
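Since scp uses the same account and host notation as ssh, the commands can be assembled programmatically. The sketch below only builds and prints the command lines and does not open a connection. The host name is an assumption reconstructed from the login command above, `kit-account` stands for your own account name, and the helper names and the file name `input.dat` are hypothetical.

```python
import shlex
from typing import List

# Assumption: login host reconstructed from the login command above.
LOGIN_HOST = "forhlr2.scc.kit.edu"


def ssh_command(account: str, host: str = LOGIN_HOST) -> List[str]:
    """Argument list for an interactive ssh login."""
    return ["ssh", f"{account}@{host}"]


def scp_upload_command(account: str, local_path: str, remote_path: str,
                       host: str = LOGIN_HOST) -> List[str]:
    """Argument list for copying a local file to the cluster with scp."""
    return ["scp", local_path, f"{account}@{host}:{remote_path}"]


# "kit-account" and "input.dat" are placeholders, not real names.
print(shlex.join(ssh_command("kit-account")))
print(shlex.join(scp_upload_command("kit-account", "input.dat", "input.dat")))
```

The lists returned by the helpers can be passed to `subprocess.run` as they are; returning a list rather than a single string avoids shell quoting problems with unusual file names.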

To gain access to ForHLR II as a project participant, complete the relevant access form, available at http://www.scc.kit.edu/hotline/formulare.php, have it signed by the project manager and send it to the ServiceDesk.

Important information for the use of ForHLR II

- ForHLR User Guide (Wiki)

- Project proposals

Hotline by e-mail, telephone and ticket system

- e-mail: fh-hotline@lists.kit.edu

- +49 721 608-48011

- ticket system: https://bw-support.scc.kit.edu