KI-Toolbox

The KI-Toolbox gives KIT employees convenient, web-based access to artificial intelligence (AI) through various large language models (LLMs). Local models, operated exclusively at the SCC, offer maximum data sovereignty. In addition, models are available via Azure OpenAI for general tasks that do not involve personal data; data entered there is not used for model training. Access to the KI-Toolbox requires successful completion of the AI training course on KOALA.

- Contact: ki-toolbox@scc.kit.edu

Access requirements

Access is possible for KIT employees, KIT students (from April 2026) and persons with a guest and partner account.

Access to the KI-Toolbox requires the successful completion of the self-study course "Understanding and Applying Generative AI" on KOALA (employees) or the ILIAS learning platform (students). After completing the course and logging into the KI-Toolbox again, access will be activated automatically.

Before using the KI-Toolbox, please read the terms of use and the privacy policy.

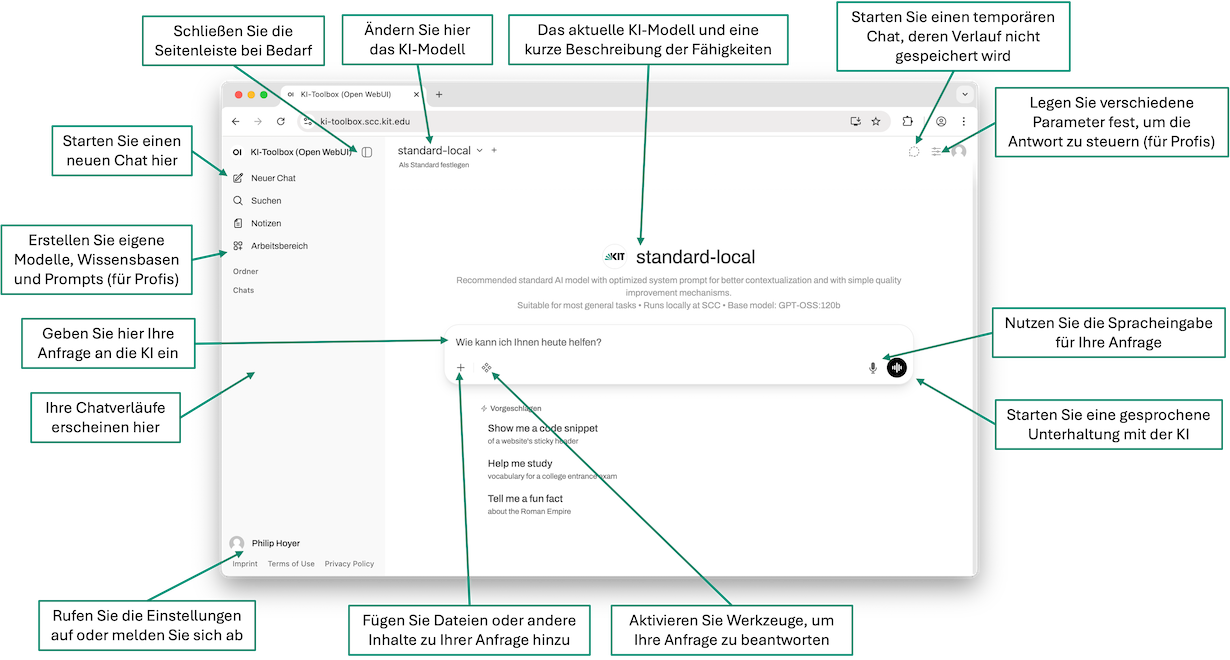

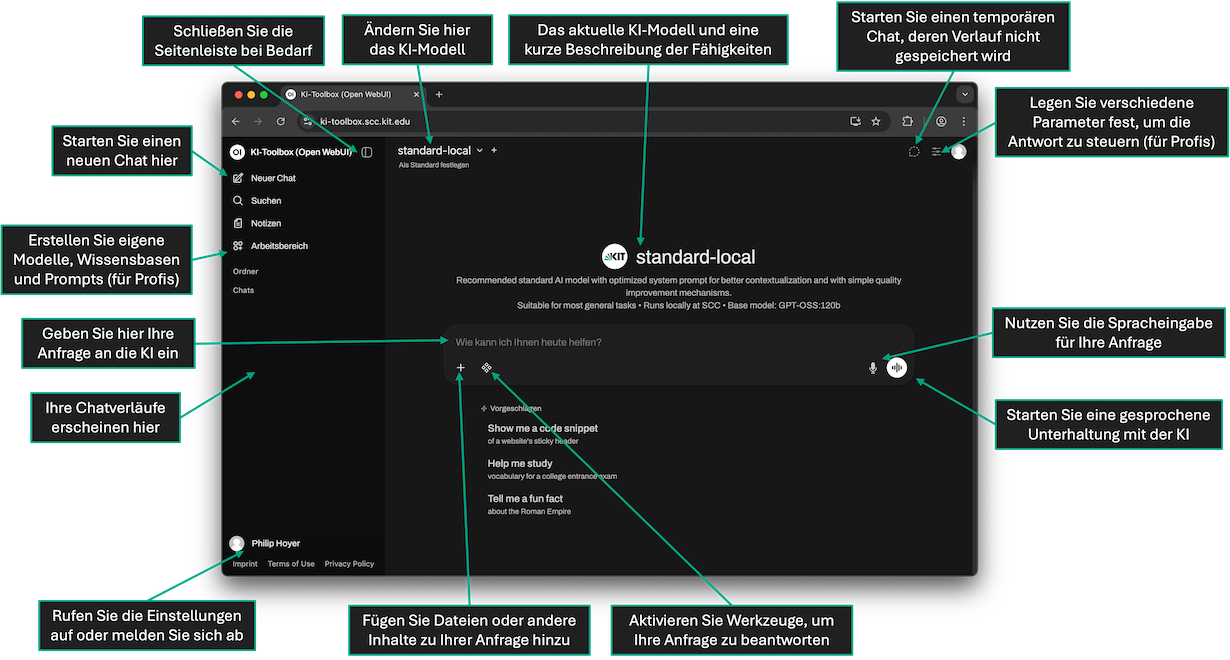

Quick start

- First complete the self-study course "Understanding and Applying Generative AI" on KOALA (employees) or on the ILIAS learning platform (students). Successful completion of the course is a mandatory prerequisite for accessing the KI-Toolbox.

- Go to https://ki-toolbox.scc.kit.edu in your browser, click on "Continue with KIT account" and log in.

- If applicable, accept the privacy policy and terms of use (first login only).

- If necessary, create a new chat history by clicking on "New chat" in the left sidebar.

- If necessary, change the desired AI model by opening the dropdown for model selection at the top of the page.

- Enter your request in the middle of the page ("How can I help you today?") and press Enter to submit.

AI models

Several AI models are currently available for selection in the KI-Toolbox. Local models are hosted at the SCC, while external models are currently sourced from the Azure cloud in European data centers.

Standard models

For users with little experience in dealing with AI models, we provide two models with an optimized system prompt:

- We recommend trying out the "Standard-Local" model first. This uses the local model "GPT-OSS 120B" and can therefore also be used for handling personal data.

- For more complex tasks without personal data, the "Standard-External" model can be used, which uses the OpenAI model "GPT-5 mini" from the Azure Cloud.

List of all models

| Model | Model ID | Host | Cost per 1M tokens | Intelligence | Speed | Notes |
|---|---|---|---|---|---|---|
| GPT-OSS 120B | kit.gpt-oss-120b | KIT | - | medium | very high | |
| Mixtral 8x22B Instruct | kit.mixtral-8x22b-instruct | KIT | - | medium | high | (1) |
| Mistral Small 4 119B | kit.mistral-small-4-119b | KIT | - | medium | very high | |
| Gemma 4 31B IT | kit.gemma-4-31b-it | KIT | - | medium | medium | |
| Qwen3.5 397B A17B | kit.qwen3.5-397b-A17b | KIT | - | high | high | |
| MiniMax M2.5 229B | kit.minimax-m2.5-229b | KIT | - | high | high | (1) |
| MiniMax M2.7 229B | kit.minimax-m2.7-229b | KIT | - | high | high | |
| GPT-4.1 | azure.gpt-4.1 | Azure (EU) | 2.20 in, 8.80 out | high | medium | (2) |
| GPT-4.1 mini | azure.gpt-4.1-mini | Azure (EU) | 0.44 in, 1.76 out | medium | high | (2) |
| GPT-4.1 nano | azure.gpt-4.1-nano | Azure (EU) | 0.11 in, 0.44 out | low | very high | (2) |
| GPT-5 | azure.gpt-5 | Azure (EU) | 1.38 in, 11 out | high | medium | |
| GPT-5 mini | azure.gpt-5-mini | Azure (EU) | 0.28 in, 2.20 out | medium | high | |
| GPT-5 nano | azure.gpt-5-nano | Azure (EU) | 0.06 in, 0.44 out | low | very high | |
| GPT-5.1 | azure.gpt-5.1 | Azure (EU) | 1.38 in, 11 out | very high | medium | |
| o3 | azure.o3 | Azure (EU) | 2.20 in, 8.80 out | high | low | (2) |
| o4-mini | azure.o4-mini | Azure (EU) | 1.21 in, 4.84 out | medium | medium | (2) |

Notes:

(1) The local models "Mixtral 8x22B" and "MiniMax M2.5" will be replaced by "Mistral Small 4" and "MiniMax M2.7" by the end of April 2026.

(2) Newer OpenAI models ("GPT-5.2" to "GPT-5.4") are not yet available in Azure as a "Data Zone Deployment" in the EU region. As soon as they are available, the older models (GPT-4.1, o3 and o4-mini) will be deactivated in the KI-Toolbox.

(3) Further external models via the Google Cloud ("Gemini" and "Claude") are planned and can be made available as soon as the legal clarifications have been completed.
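For scripted use, the distinction between local and external models can be checked before a request is sent. The following is a minimal sketch, not part of the KI-Toolbox itself: it assumes that the `kit.` prefix of a model ID reliably marks a model hosted at KIT, as the table suggests, and the function names `is_local_model` and `choose_model` are hypothetical.

```python
def is_local_model(model_id: str) -> bool:
    """Return True if the model ID carries the "kit." prefix.

    Assumption: the prefix reflects the "Host" column of the model table.
    """
    return model_id.startswith("kit.")


def choose_model(
    model_id: str,
    contains_personal_data: bool,
    fallback: str = "kit.gpt-oss-120b",
) -> str:
    """Fall back to a local model when personal data would go to an external one."""
    if contains_personal_data and not is_local_model(model_id):
        return fallback
    return model_id
```

With these helpers, `choose_model("azure.gpt-5-mini", contains_personal_data=True)` returns the local fallback, while requests without personal data keep the model that was asked for.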

Budget

Please note that a maximum budget of USD 25 per user per month is currently available for the use of the external models.

If the budget is exceeded, an error message is displayed when using an external model ("ExceededBudget: End User=xy1234@kit.edu over budget. Spend=xxx.xxxxxxx, Budget=25.0"). The budget is reset at 00:00 UTC on the first day of each month. Local models do not count towards the budget and remain usable even after the budget has been exceeded.
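Scripts that call external models may want to recognize this error and react to it, for example by switching to a local model. The sketch below extracts the two numbers from the message. The pattern is modelled only on the text quoted above, so the exact wording returned by the service is an assumption, and `parse_budget_error` is a hypothetical helper name.

```python
import re
from typing import Optional, Tuple

# Pattern modelled on the quoted error text; the real message may differ.
_BUDGET_ERROR = re.compile(
    r"ExceededBudget:.*?Spend=(?P<spend>\d+(?:\.\d+)?),\s*Budget=(?P<budget>\d+(?:\.\d+)?)"
)


def parse_budget_error(message: str) -> Optional[Tuple[float, float]]:
    """Return (spend, budget) if the message is a budget error, else None."""
    match = _BUDGET_ERROR.search(message)
    if match is None:
        return None
    return float(match.group("spend")), float(match.group("budget"))
```

A return value of `None` means the message was some other error and should be handled separately.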

Unfortunately, it is not yet possible to view your remaining budget or to increase it individually. We are working on it.
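Until the budget can be viewed, a rough estimate can be made from the prices in the model table. The following sketch assumes that the listed prices are in the same currency as the monthly budget and that billing is strictly proportional to token counts; both are assumptions, and the function names are hypothetical. Only a few external models are included for brevity.

```python
from typing import Dict, Tuple

# (input price, output price) per 1M tokens, copied from the model table.
# Assumption: same currency as the monthly budget.
PRICES: Dict[str, Tuple[float, float]] = {
    "azure.gpt-5": (1.38, 11.0),
    "azure.gpt-5-mini": (0.28, 2.20),
    "azure.gpt-5-nano": (0.06, 0.44),
}
MONTHLY_BUDGET = 25.0


def estimate_cost(model_id: str, input_tokens: int, output_tokens: int) -> float:
    """Estimate the cost of one request from its token counts."""
    price_in, price_out = PRICES[model_id]
    return (input_tokens * price_in + output_tokens * price_out) / 1_000_000


def requests_within_budget(model_id: str, input_tokens: int, output_tokens: int) -> int:
    """Estimate how many identical requests fit into one monthly budget."""
    cost = estimate_cost(model_id, input_tokens, output_tokens)
    if cost <= 0:
        raise ValueError("token counts must give a positive cost")
    return int(MONTHLY_BUDGET // cost)
```

For example, `requests_within_budget("azure.gpt-5-mini", 1000, 1000)` estimates how many requests of 1,000 input and 1,000 output tokens fit into one month. Actual spend is tracked by the service and may differ from this estimate.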

API key

The AI models can also be used via an API (OpenAI-compatible interface). You can generate your personal API key yourself in the KI-Toolbox via "Settings" (bottom left via the menu) -> "Accounts" -> "API key" (click on "Show" if necessary). Please treat your API key like a personal password and protect it from unauthorized access. If you suspect that your API key may have been compromised, regenerate it immediately using the KI-Toolbox.

For external tools, the base URL for the API is usually "https://ki-toolbox.scc.kit.edu/api". You can find the model ID in the table above or by displaying the tooltip in the KI-Toolbox with the mouse pointer over the model selection.
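As an illustration, a chat request against this base URL could be built with the Python standard library as sketched below. The `/chat/completions` path and the response layout are assumptions based on the usual OpenAI-compatible convention and are not confirmed here; the function names are hypothetical.

```python
import json
import urllib.request

# Base URL as stated above; the "/chat/completions" path is an assumption
# based on the usual OpenAI-compatible layout.
BASE_URL = "https://ki-toolbox.scc.kit.edu/api"


def build_chat_request(api_key: str, model_id: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) a chat completion request."""
    payload = {
        "model": model_id,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send_chat_request(request: urllib.request.Request, timeout: float = 60.0) -> str:
    """Send the request and return the text of the first choice.

    Assumes the OpenAI-style response shape: choices[0].message.content.
    """
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

A typical call would read the key from an environment variable (for example a hypothetical `KI_TOOLBOX_API_KEY`) rather than hard-coding it, then pass `build_chat_request(key, "kit.gpt-oss-120b", "Hello")` to `send_chat_request`.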

Further information on using the API can be found in the ZML instructions.

Service accounts

To use the KI-Toolbox in chatbots or other third-party applications that require permanent API access, we recommend using a non-personal API key via a KIT Service Account. You can apply for a service account at the SCC ServiceDesk via the ticket system. Then send the exact account name to ki-toolbox@scc.kit.edu so that it can be activated for use with the KI-Toolbox.

Groups

Groups allow various artifacts (models, knowledge, notes, prompts, etc.) to be shared with several people for joint editing or use. Groups cannot be created directly in the KI-Toolbox; instead, they are synchronized from the SCC group management at login. Specifically, when a member of an SCC group named in the form "<OE>-ki-toolbox-<name>" logs in, that group is created automatically in the KI-Toolbox and the person's group memberships are updated: new groups are created, new memberships are added and memberships that no longer exist are removed.

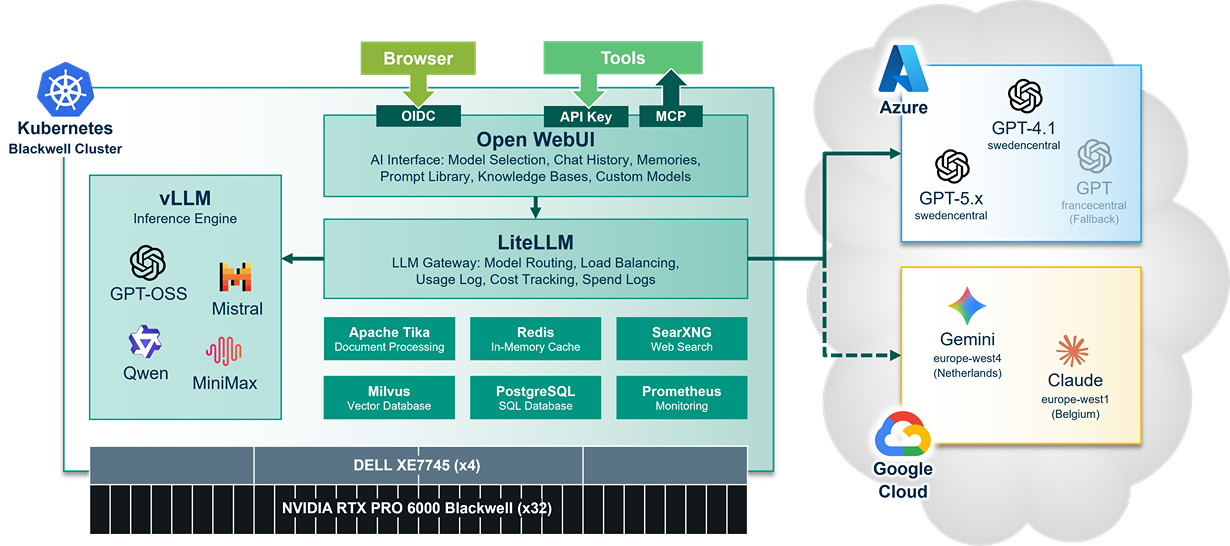
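The synchronization rule described in this section can be sketched as a set difference. This is only an illustration of the described behaviour, not the actual implementation: the regular expression is one possible reading of "<OE>-ki-toolbox-<name>", and the group names in the usage note are invented examples.

```python
import re
from typing import Iterable, Set, Tuple

# One possible reading of "<OE>-ki-toolbox-<name>": any non-empty prefix,
# the literal "-ki-toolbox-", then a non-empty name. Allowed characters
# are not documented, so the pattern is deliberately permissive.
GROUP_PATTERN = re.compile(r"^(?P<oe>.+?)-ki-toolbox-(?P<name>.+)$")


def toolbox_groups(scc_groups: Iterable[str]) -> Set[str]:
    """Keep only the SCC groups that match the KI-Toolbox naming scheme."""
    return {group for group in scc_groups if GROUP_PATTERN.match(group)}


def plan_sync(
    scc_groups: Iterable[str], current_memberships: Iterable[str]
) -> Tuple[Set[str], Set[str]]:
    """Return (memberships to add, memberships to remove) for one login."""
    wanted = toolbox_groups(scc_groups)
    current = set(current_memberships)
    return wanted - current, current - wanted
```

For a person whose SCC groups are `SCC-ki-toolbox-docs`, `SCC-other-group` and `IAI-ki-toolbox-lab`, and who currently belongs to `SCC-ki-toolbox-docs` and `SCC-ki-toolbox-old` in the KI-Toolbox, `plan_sync` would add `IAI-ki-toolbox-lab` and remove `SCC-ki-toolbox-old`.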

Hardware and software

The KI-Toolbox software stack consists exclusively of open source software and runs in a Kubernetes environment on 4 nodes (Dell XE7745) at the SCC. Each node is equipped with 8 NVIDIA RTX PRO 6000 Blackwell GPUs. Open WebUI serves as the front end, while LiteLLM handles load balancing, cost management and logging. The local AI models are served with vLLM and LMCache and loaded from the local S3 storage at the SCC.